Sound propagation mechanics, frequency, amplitude, period, wavelength, loudness, and decibels are conceptually explained, then mathematically described.

Like many of you, I am an audiophile. My background is in applied mathematics, so I tend to think of audio in analytical and mathematical terms.

Sound can be understood and analyzed using some very elegant mathematical concepts. Using that foundation, I have been involved in a number of mathematical activities centered around sound. In this technical article, I will explain many of these concepts without getting too deeply into the mathematics. Short sections will include the mathematics, but simple, clear explanations will be provided of all concepts so that the mathematics may be skipped if desired.

The sections that appear in this paper include the following:

- A Short History

- Sound Propagation Mechanics

- Frequency And Amplitude

- A Mathematical Diversion

- Period And Wavelength

- Frequency Comparison

- Loudness And Decibels

- Another Mathematical Diversion

- Comparative Loudness

- Frequency vs. Loudness

- Demonstration Software Program

At the time I received my Ph.D., very few universities taught courses regarding sound analysis. I was asked by several local universities to teach courses in Signal Processing. In simple terms, signal processing uses mathematical algorithms to improve or to understand any waveform traveling through any medium. Since sound is a waveform of pressure traveling through the medium of air, teaching signal processing was my opportunity to build a solid academic and practical foundation in the synthesis and analysis of sound.

Since mathematics is used to characterize any science and especially sound, I dug right in. At the time, useful books on signal and sound processing were nowhere to be found. So, I searched journal articles, formulated implementation algorithms, lectured the students, then gave assignments using the algorithms. As a result of these assignments, the students quickly learned the meaning of various sound related concepts, the strengths and weaknesses of various algorithms, and the limitations of usage for analyzing real sounds.

My first sound related project was to serve as a systems engineer in the design of communications satellites. Another interesting project involving sound was the design and implementation of the F-14 radar system. I also developed a software package for sound engineers to shape and mix music. In recent years, I have served as an expert witness in the criminal and civil courts, with some cases involving loudness levels, frequencies, sound attenuation, and hearing protection.

Interestingly enough, many of the concepts that are used to characterize sound did NOT come from sound engineers, electrical engineers, or computer scientists. Early use of the concept of frequency seems to trace back to the 1640s, when the term was derived from the original Latin word frequentia, meaning "occurring often". Of course, this usage had nothing to do with sound, which is a physical phenomenon. Application of the term frequency to the field of physics did not occur until 1831, when frequency referred to "rate of recurrence".

Analysis of physical phenomena as waves came from a mathematician/physicist. Jean-Baptiste Joseph Fourier was attempting to describe the physics of heat transfer and vibrations. In 1822, Fourier published The Analytical Theory of Heat [1]. This work was translated into English in 1878. In this work, Fourier claimed that any function of a variable, whether continuous or discontinuous, can be expanded in a series of sines and cosines of multiples of the variable. In 1930, this whole concept of decomposition of a wave into sines and cosines that are multiples of each other was extended to any kind of wave phenomenon characterized by frequencies.

An MIT mathematician, Norbert Wiener, mathematically proved this extension to sound in his article Generalized Harmonic Analysis [2] in 1930. Wiener’s article proved specifically that Fourier’s expansion of an arbitrary function into sines and cosines applies when the variable is a frequency. This article became the foundation for frequency analysis of sounds. By 1964, generalized harmonic analysis had become fully disseminated into the mathematics and engineering communities.

The term decibel was created by Bell Labs in 1929 [3]. This term was initially defined to compare the level of an output to the level of an input. As originally formulated by Bell Labs, the decibel was used to compare the power of the signal out of a phone transmission cable relative to the signal into that cable, after the signal had traveled some distance. Of course, the original name was the "bel", after Alexander Graham Bell, the founder of Bell Labs. The decibel was adopted as an international unit at the First International Acoustical Conference (Paris, July 1937) for scales of energy and pressure levels [4]. As used in the audio field today, decibel is the relative loudness of a sound when compared to the sound of a human voice speaking at a whisper.

All sound initiates with vibration of an object. If the sound is the human voice, then vibration of the vocal cords initiates the sound. An instrument with strings initiates sound by vibration of the strings.

The vibrating object, such as the vocal cords, compresses the molecules in the air in front of the object. When air is compressed, the molecules in the compressed air want to expand to their original volume/separation distances. As a result, expansion of the compressed air creates a greater pressure on the surrounding air.

The expanding molecules that exert the pressure collide with the surrounding molecules. The expanding molecules have momentum because they have mass and they have velocity (obviously, since they are moving). When the expanding molecules impact with the surrounding molecules, transfer of momentum occurs. As a result of the transfer of momentum, the surrounding molecules move and become compressed themselves.

Only velocity transfers between the expanding air molecule and the surrounding impacted air molecule. At the point of impact, the mass of the expanding molecule and the mass of the surrounding impacted molecule are the same. So, in the conservation of momentum, only the velocity of the expanding molecule is transferred to the impacted molecule. A complete transfer of velocity occurs, so that the impacted molecule moves away at the same speed as the expanding molecule. The expanding molecule stops in place, restoring separation to the previously expanding molecules.
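As a minimal sketch of this momentum argument, the standard formulas for a one-dimensional, perfectly elastic collision can be evaluated for two equal masses. Treating air molecules as identical balls colliding head-on is an idealization, and the function name, the unit mass, and the 500 m/s speed below are illustrative assumptions rather than measured values.

```python
def elastic_collision_1d(m1, v1, m2, v2):
    """Return the velocities after a 1-D perfectly elastic collision.

    Both momentum and kinetic energy are conserved.
    """
    total = m1 + m2
    v1_after = ((m1 - m2) * v1 + 2.0 * m2 * v2) / total
    v2_after = ((m2 - m1) * v2 + 2.0 * m1 * v1) / total
    return v1_after, v2_after


# Hypothetical values: equal masses (arbitrary units), one molecule at rest.
mass = 1.0
moving_after, still_after = elastic_collision_1d(mass, 500.0, mass, 0.0)

# With equal masses the moving molecule stops and the struck one
# leaves with the full original velocity.
print(moving_after, still_after)  # prints: 0.0 500.0
```

With unequal masses, the same function shows that only part of the velocity would be handed over, which is why the equal-mass assumption matters to the argument.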

Vibration compresses the air. Compressed air expands and exerts pressure on surrounding air, which is compressed, which expands, which exerts pressure on surrounding air. As a result of these successive compressions and expansions, the location of the compressed air moves over time. This movement of the area containing compressed air is called a pressure wave.

As the air is compressed, pressure begins to increase. Eventually, a maximum pressure occurs because the vibrating source has moved as far as possible in a specific direction. Once the vibrating source begins moving in another direction, then the pressure starts to decrease in the original direction and increase in the new direction.

Recall that the source is vibrating. Vibration consists of oscillation around a fixed point, such as a zero pressure level. As the vibrating object moves in one direction from the fixed point, the vibrating source compresses air in that direction. The vibrating object then returns to the fixed point and moves in another direction. Movement in this new direction compresses the air in that direction and allows the expansion of the air in the original direction. As a result of the compression in opposite directions, a pressure wave is created in both directions. This multidimensional pressure wave appears on a pressure wave plot as a positive pressure and a negative pressure.

Actually, the pressure is never really negative. Pressure is increasing to a maximum but in a different direction of movement of the vibrating source. Calling the pressure negative is simply a graphing convention to represent the pressure wave.

At some point, the pressure wave arrives at a receiver. The receiver then begins to vibrate according to the ebb and flow of the pressure wave. This ebb and flow of the pressure wave is what the receiver experiences as sound.

A pressure wave is created that consists of areas of compressed air that move along a longitudinal path, parallel to the direction of travel. This pressure wave is the transmission of sound.

The simplest analogy is to think of waves on the ocean. Wind is the vibrating source for a wave. As the wind hits the water, wind molecules are vibrating in multiple directions. As the wind molecules impact the water molecules, the transfer of momentum to the water molecules occurs. Then a wave is formed. Each wave transfers momentum of molecules upon impact with the molecules of the static surrounding water, causing the next wave. Successive waves are caused by transfer of momentum from the previous wave. Since the wind molecules were vibrating, transfer of momentum of the water molecules takes place in all directions, resulting in wave peaks and troughs.

The same is true with sound. A vibrating object causes a transfer of momentum when air particles interact. Since the vibrations are in multiple directions, peaks and troughs occur in the pressure level.

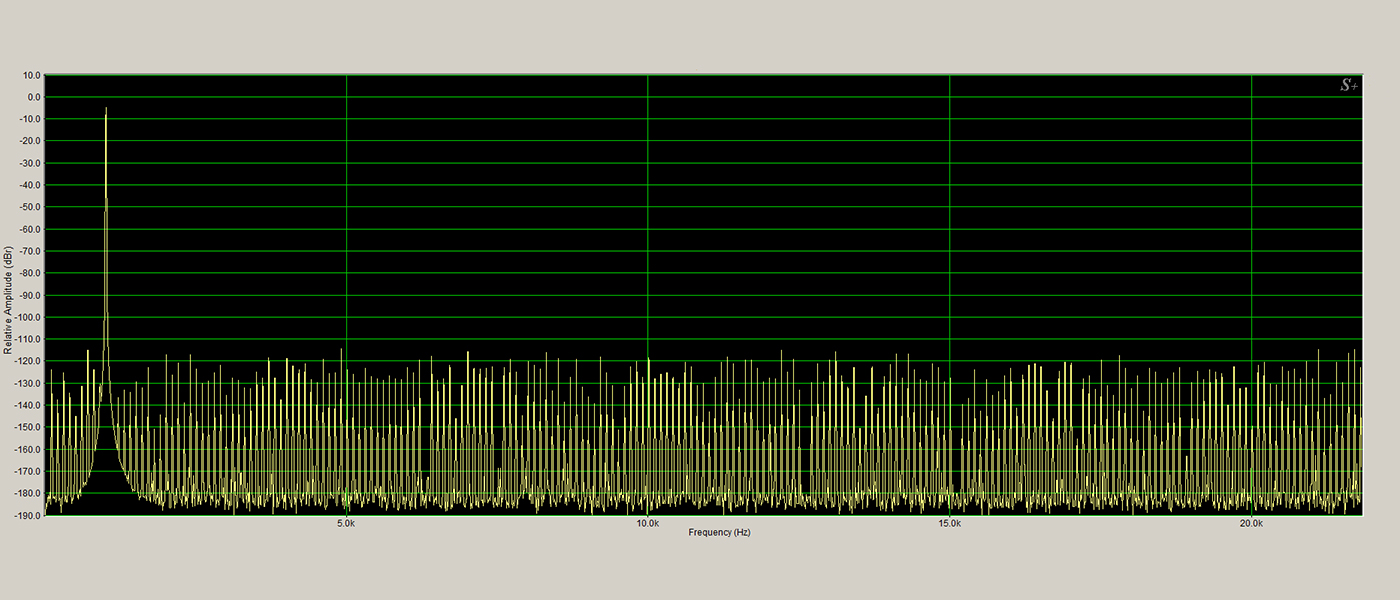

Figure 1 illustrates how sound pressure levels in a single frequency vary as the compressed air increases pressure in a positive and negative direction, resulting in the travel of sound pressure through the air.

Figure 1: Sound Traveling Through The Air

(head picture: © Sergey Strelkov, Saint-Petersburg State Polytechnic University, Russia)

(ear picture: © The McGraw-Hill Companies, Inc.)

Eventually, the sound reaches a human ear. Sound is collected by the pinna (the visible part of the ear) and directed through the outer ear canal (the auditory meatus). The sound causes the eardrum (tympanic membrane) to vibrate. This vibration in turn causes a series of three tiny bones (the hammer, the anvil, and the stirrup) in the middle ear to vibrate. As the stirrup (stapes) rocks back and forth against the oval window, the oval window transmits pressure waves of sound through the fluid of the cochlea, sending the organ of Corti in the cochlear duct into motion. The fibers near the cochlear apex resonate to lower frequency sound while fibers near the oval window respond to higher frequency sound.

This whole process of a moving pressure wave initiates with the source vibration. Movement of the oscillating high and low pressure areas is then a consequence of conservation of momentum as the pressure wave moves through air. In other words, transmission of sound is an application of Newtonian mechanics — conservation of momentum that occurs when high pressure molecules impact static, non-moving molecules.

Sound pressure levels transmitted from a vibrating source depend on the density of air. In simple terms, less dense air possesses fewer molecules for the vibrating source to compress. Clearly, denser air possesses more molecules for the vibrating source to compress. More or fewer molecules being compressed results in a greater or lesser transfer of momentum to the surrounding air. Density of air is controlled by temperature and air pressure. Studies have shown that the density of air is pretty much unaffected by humidity. For instance, the same source transmits a different sound pressure level in high and low temperatures.
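To put rough numbers on the dependence of density on temperature and pressure, the sketch below applies the ideal gas law to dry air. The ideal gas treatment, the gas constant for dry air, the standard sea-level pressure, and the function name are assumptions introduced for illustration; they do not come from the studies mentioned above.

```python
R_DRY_AIR = 287.05        # specific gas constant for dry air, J/(kg*K)
SEA_LEVEL_PA = 101325.0   # standard sea-level pressure, Pa


def air_density(pressure_pa, temperature_c):
    """Dry-air density in kg/m^3 from the ideal gas law: rho = P / (R * T)."""
    temperature_k = temperature_c + 273.15
    return pressure_pa / (R_DRY_AIR * temperature_k)


# Same pressure, three different temperatures: colder air is denser.
for temp_c in (-10.0, 15.0, 35.0):
    rho = air_density(SEA_LEVEL_PA, temp_c)
    print(f"{temp_c:6.1f} C -> {rho:.3f} kg/m^3")
```

At a fixed pressure the density falls as the temperature rises, so a source vibrating in warm air has fewer molecules to compress than the same source in cold air.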

For a mathematical analysis of the pressure wave transmission based on conservation of momentum and the pressure level dependency on density of air, see "Chapter 47: Sound. The Wave Equation" in The Feynman Lectures on Physics, Volume 1. This chapter can be found online at The Feynman Lectures.

Pressure levels exerted as the sound wave propagates through the atmosphere are expressed as Pascals (Pa). A Pa is equal to one Newton per square meter or approximately 0.000145 pounds per square inch (PSI). Both Newtons and pounds are expressions of the amount of force. So, the Pa unit describes the amount of force exerted equally over a specific area — square meter or square inch. Force being involved in the movement of sound should come as no surprise at this point. When a moving molecule impacts a static molecule, as occurs in the movement of the sound pressure wave, the moving molecule exerts a force on the static molecule. This force is what directly causes the conservation of momentum. Think of swinging your fist at an object. That fist exerts a force over an area that has an effect on the impacted object. In the case of air molecules, the impacting molecule is your fist. The impacted molecules are the object. The impacted molecule moves, the impacting molecule stops.

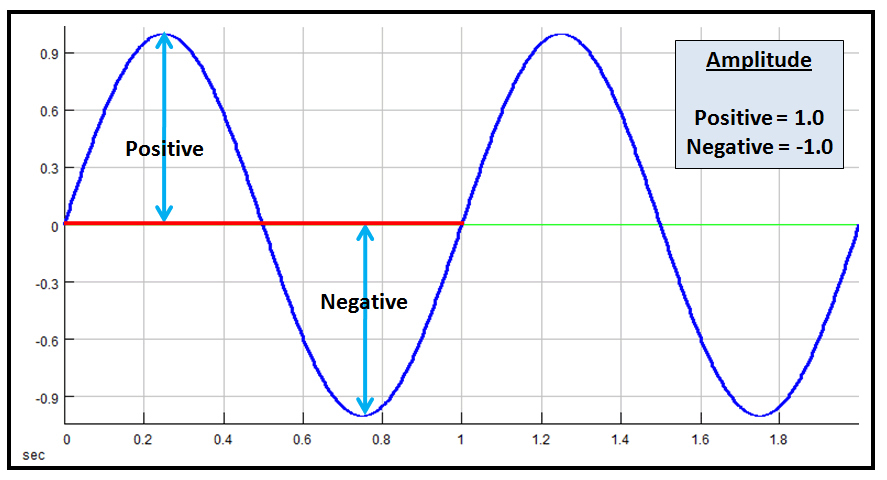

Waveforms consisting of crests and troughs of sound pressure level are described by two parameters: frequency and amplitude.

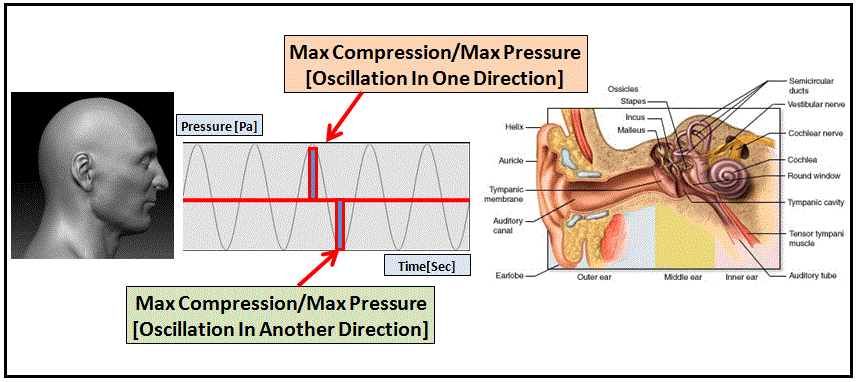

Frequency of a sound waveform is the number of cycles over a fixed period of time. A waveform cycle is identified by the sound pressure level (SPL) of the air molecules moving from zero to positive maximum, back to zero, to negative minimum, and back to zero.

Figure 2: Frequency of a Sound Waveform

With sound waveforms, frequency is generally identified as cycles per second or Hertz (Hz). Hertz is named after Heinrich Rudolf Hertz, a 19th century physicist. The term Hertz replaced “cycles per second”, so 40 Hz means 40 cycles per second. Figure 2 presents a very simple example of a sine wave with the cycle identified. In this figure, a single cycle of the sound wave is 1 second. This wave goes from zero to maximum to zero to minimum to zero in 1 second. So, the frequency is 1 cycle per second, or 1 Hz.

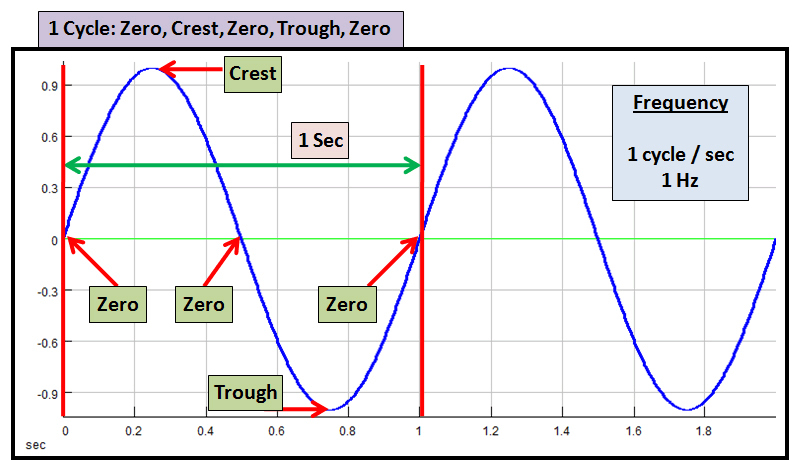

Amplitude is the pressure level of the moving air from a zero reference line. Since sound waves have crests and troughs, sound waves have a maximum amplitude in the positive direction (crest) and a minimum amplitude in the negative direction (trough).

Figure 3: Amplitude of A Sound Waveform

In Figure 3, the positive and negative amplitudes are identified. Units of amplitude represent the number of Pascals (Pa) of pressure. However, since the actual pressure level for an actual sound wave can be quite high, the amplitude of a sound wave is often represented in a range of -1 to 1 or 0 to 1. In this example, the positive amplitude of a crest is 1.0. And, the negative amplitude of a trough is -1.0.

Translating actual pressure levels in Pa into a smaller range for representation does have one negative side effect. Actual pressure levels of the sound wave are lost. However, if the pressure levels range from -10,000,000 Pa to +10,000,000 Pa, then the vertical axis of the graph would become messy, unwieldy, and perhaps difficult to read. So, loss of the actual pressure levels is insignificant when compared to the readability of the sound wave graph.
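The rescaling described here can be sketched as a division by the largest magnitude in the data. The function name and the sample readings below are hypothetical; the point is only that the scaled values fit in the range -1 to 1, and that the actual pressures can be recovered only if the peak value is kept alongside them.

```python
def normalize(samples_pa):
    """Scale pressure samples so that the largest magnitude becomes 1.0.

    Returns the scaled samples and the peak pressure used for scaling.
    """
    peak = max(abs(p) for p in samples_pa)
    if peak == 0:
        return list(samples_pa), 0.0
    return [p / peak for p in samples_pa], peak


# Hypothetical pressure readings in Pa over one cycle.
pressures_pa = [0.0, 31.5, 63.0, 31.5, 0.0, -31.5, -63.0, -31.5]

scaled, peak = normalize(pressures_pa)
print(scaled)  # values now lie between -1.0 and 1.0
print(peak)    # the one number needed to undo the scaling

# Multiplying back by the peak restores the original pressures.
restored = [s * peak for s in scaled]
```

If only the scaled values are stored or plotted, the peak is discarded and the absolute pressure level can no longer be read from the graph.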

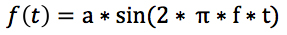

Both of the waveforms in Figures 2 and 3 can be mathematically described. These waveforms are sine waves. The sine wave plays a big role in sound analysis, as will be discussed at a later time.

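Mathematically, the sound waves in the figures can be defined as follows:

$$y(t) = a \sin(2 \pi f t)$$

where $y(t)$ is the pressure level at time $t$ in seconds, $a$ is the maximum amplitude, and $f$ is the frequency in Hz.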

In both Figures 2 and 3, these formulas have been applied with a = 1.0 and f = 1 Hz.
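For readers who prefer to see numbers, the sketch below samples a sine wave with a = 1.0 and f = 1 Hz over one second. It assumes the standard sinusoid y(t) = a sin(2πft); the function name and the choice of 8 samples per second are illustrative assumptions.

```python
import math


def sine_wave(amplitude, frequency_hz, duration_s, sample_rate_hz):
    """Sample y(t) = a * sin(2*pi*f*t) at evenly spaced times.

    Returns a list of (time, value) pairs, including both end points.
    """
    count = int(duration_s * sample_rate_hz)
    return [
        (n / sample_rate_hz,
         amplitude * math.sin(2.0 * math.pi * frequency_hz * n / sample_rate_hz))
        for n in range(count + 1)
    ]


# a = 1.0 and f = 1 Hz, as in Figures 2 and 3.
for t, y in sine_wave(1.0, 1.0, 1.0, 8):
    print(f"t = {t:5.3f} s   y = {y:+.3f}")
```

The printed values rise from zero to the crest at 0.25 s, return to zero at 0.5 s, reach the trough at 0.75 s, and come back to zero at 1 s, which is one full cycle.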

Some authors claim that frequency and wavelength are required to specify a simple waveform. This statement is not correct. Only maximum amplitude and frequency are necessary to specify a waveform.

Once frequency has been determined, two other characterizations can be calculated.

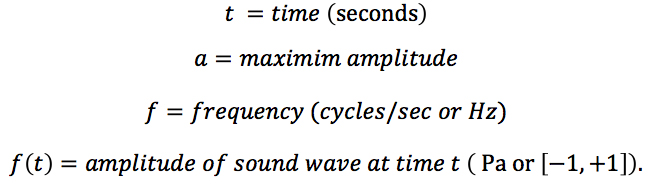

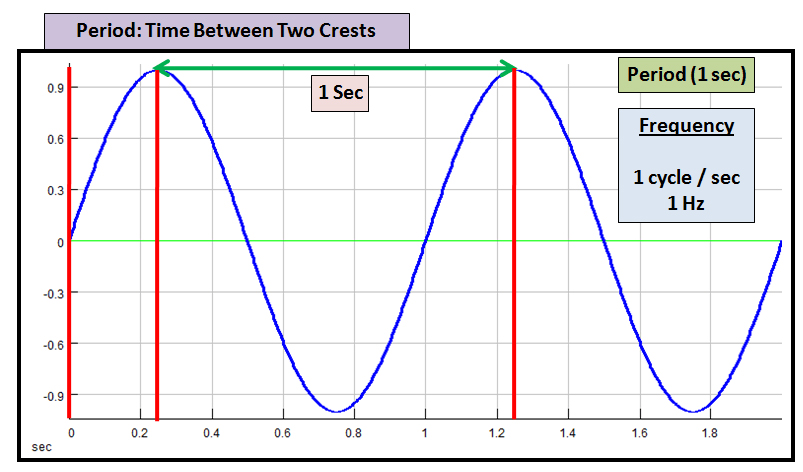

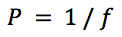

Period is the time in seconds between two crests of a waveform. Period can also be determined between two zero crossings or two troughs.

Figure 4: Period of a Sound Waveform

A simple relationship exists between period and frequency:

$$T = \frac{1}{f}$$

where $T$ is the period in seconds and $f$ is the frequency in Hz.

In Figure 4 above, a simple sound wave consisting of a sine wave is displayed. This sound wave has a frequency of 1 Hz. Using the formula above, this sound wave has a period of 1 second (1/1 Hz). A 50 Hz signal would have a period of 1/50 sec or 20 milliseconds.
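The period calculation is simple enough to check by hand, but a short sketch makes it easy to try other frequencies. It uses the reciprocal relationship period = 1 / frequency that the worked values above rely on; the function name is an assumption.

```python
def period_seconds(frequency_hz):
    """Period in seconds for a frequency in Hz (period = 1 / frequency)."""
    if frequency_hz <= 0:
        raise ValueError("frequency must be positive")
    return 1.0 / frequency_hz


# 1 Hz and 50 Hz are the examples from the text; 440 Hz is the note A4.
for f in (1.0, 50.0, 440.0):
    print(f"{f:7.1f} Hz -> {period_seconds(f) * 1000.0:8.3f} ms")
```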

Wavelength is a completely different quantity than period. Wavelength is the distance from the beginning of the positive pressure to the end of the negative pressure within one cycle. Distance traveled is dependent on the speed at which sound travels through the atmosphere. Sound travels at a speed of 340.29 meters/second at sea level.

Speed of sound varies depending on the density of the atmosphere. Both temperature and atmospheric pressure affect the speed of sound. Studies show that humidity does not affect the speed of sound.

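As with period, a simple relationship exists between wavelength and frequency:

$$\lambda = \frac{v}{f}$$

where $\lambda$ is the wavelength, $v$ is the speed of sound, and $f$ is the frequency in Hz.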

Meters per second are generally used in this equation. In the example in Figure 4, the wavelength is 340.3 meters for 1 Hz.
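A short sketch shows how quickly wavelength shrinks as frequency rises. It assumes the usual relationship wavelength = speed / frequency and uses the sea-level speed of 340.29 meters/second quoted earlier; the function name and the extra example frequencies are illustrative.

```python
SPEED_OF_SOUND_M_S = 340.29  # sea-level value quoted in the text


def wavelength_m(frequency_hz, speed_m_s=SPEED_OF_SOUND_M_S):
    """Wavelength in meters: speed of sound divided by frequency."""
    if frequency_hz <= 0:
        raise ValueError("frequency must be positive")
    return speed_m_s / frequency_hz


# 1 Hz is the Figure 4 example; the others are common audio frequencies.
for f in (1.0, 50.0, 440.0, 1000.0):
    print(f"{f:7.1f} Hz -> {wavelength_m(f):9.3f} m")
```

Passing a different `speed_m_s` shows how the same frequency has a different wavelength when the speed of sound changes.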

The period of a sound wave is the time elapsed, while the wavelength of a sound wave is the distance from the beginning of the positive pressure to the end of the negative pressure. Some authors mistakenly and inaccurately use the terms interchangeably. Clearly, these terms have very different meanings.

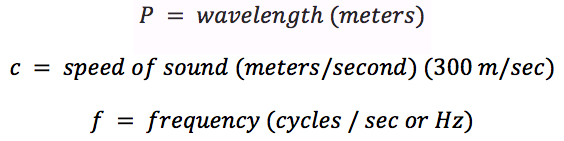

Different frequencies experience a different number of crests and troughs within that same cycle time of 1 second. For instance, a sine wave frequency of 1 Hz will show one crest and one trough within a 1 second period. By comparison, a sine wave frequency of 3 Hz will exhibit three crests and three troughs within a 1 second period.

Figure 5: Comparison of Two Sine Wave Curves

Figure 5 shows the number of crests and troughs within a single period of 1 second for two different frequencies, 1 Hz and 3 Hz. The two frequency graphs are shown for a single period and aligned vertically in order to easily compare the number of crests and troughs. The 1 Hz sine wave frequency on the top exhibits a single crest and trough during the period. On the bottom, the 3 Hz sine wave frequency shows three crests and three troughs during the same exact period. The comparative number of crests and troughs between two frequencies will be in the same ratio as the frequencies themselves.
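The crest and trough counts can be confirmed numerically by sampling each sine wave and counting local maxima and minima. This is a minimal sketch; the function name and the 1000 samples per second are assumptions chosen so that each crest and trough falls clearly on or near a sample.

```python
import math


def count_crests_and_troughs(frequency_hz, duration_s=1.0, sample_rate_hz=1000):
    """Count local maxima (crests) and local minima (troughs) of a sine wave."""
    count = int(duration_s * sample_rate_hz)
    y = [
        math.sin(2.0 * math.pi * frequency_hz * n / sample_rate_hz)
        for n in range(count + 1)
    ]
    # A crest is a sample higher than both neighbors; a trough is lower than both.
    crests = sum(1 for i in range(1, count) if y[i - 1] < y[i] > y[i + 1])
    troughs = sum(1 for i in range(1, count) if y[i - 1] > y[i] < y[i + 1])
    return crests, troughs


for f in (1.0, 3.0):
    crests, troughs = count_crests_and_troughs(f)
    print(f"{f:.0f} Hz: {crests} crest(s), {troughs} trough(s) in 1 second")
```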

Many sounds have clearly identified frequencies. A human ear can detect human voice frequencies in the range 85 Hz to 255 Hz, depending on whether the person is male or female.

Pure musical tones also have frequencies. For instance, the 4th octave starts with the note middle C, called C4, which has a fundamental frequency of 261 Hz. This same octave contains the note A4, which exhibits a fundamental frequency of 440 Hz. And, this octave ends with the note B4, a sound with a fundamental frequency of 494 Hz.
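These note frequencies can be reproduced, to within rounding, from A4 alone. The sketch below assumes twelve-tone equal temperament tuned to A4 = 440 Hz, in which each semitone multiplies the frequency by the twelfth root of 2. That tuning system is an assumption made for this example, and the function name is hypothetical.

```python
A4_HZ = 440.0  # reference pitch assumed for this sketch


def note_frequency(semitones_from_a4):
    """Frequency of a note a given number of semitones above (+) or below (-) A4."""
    return A4_HZ * 2.0 ** (semitones_from_a4 / 12.0)


# C4 is 9 semitones below A4; B4 is 2 semitones above A4.
for name, offset in (("C4", -9), ("A4", 0), ("B4", 2)):
    print(f"{name}: {note_frequency(offset):.2f} Hz")
```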

When a sound consists of a single frequency, the note is often called a pure tone. Musical instruments produce sounds that have a pure tone (fundamental frequency) and harmonics (multiples) of that fundamental frequency which are unique to the particular instrument, allowing differentiation of a clarinet from a trumpet.

The concept of pure tone will be discussed later in this article. Fundamental frequency and harmonics will be discussed in a future article.

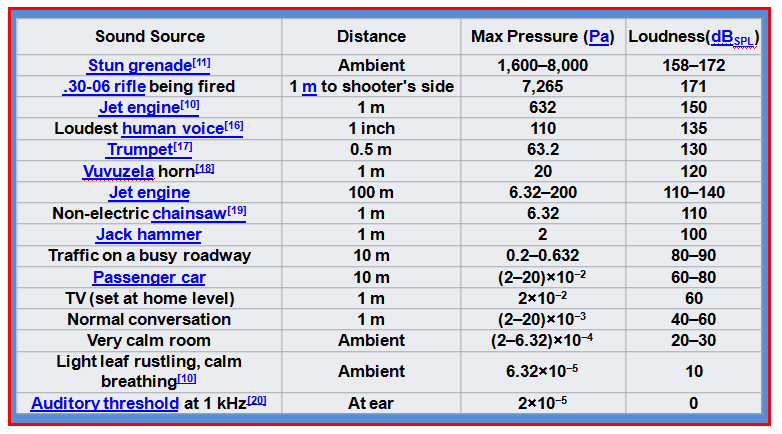

Every sound moves through the atmosphere. This movement takes the form of a sound pressure wave. Each sound pressure wave has a maximum sound pressure level that varies from positive to negative, expressed as Pascals (Pa). Some examples of maximum sound pressures appear below in Figure 6.

Figure 6: Comparison of Two Sine Wave Curves

Source: See Wikipedia References indicated by superscripts

Normal conversation sound pressure ranges from 0.002 Pa to 0.02 Pa. In comparison, a trumpet reaches a sound pressure of 63 Pa. A jet engine reaches a sound pressure of 632 Pa. These pressure levels tell you that the human voice exerts less pressure on the surrounding air molecules than a trumpet. And, the trumpet exerts less pressure on the surrounding air molecules than a jet engine.

Of special interest is the auditory threshold of human hearing, universally recognized as 2 × 10⁻⁵ Pa or 20 µPa, defined as p0 in the equations below. Auditory threshold is the lowest sound that can be detected by the human ear. This sound level is considered to be the sound level of a person whispering.

Using sound pressure levels, a standard definition has been established for the loudness of a sound. Recall that the decibel was originally defined as the ratio of an output power level to an input power level. This concept has been extended to relate loudness of sounds in terms of relative sound pressure levels. Loudness is defined in terms of the ratio of a sound pressure level (serving the role of output power) to the auditory threshold sound pressure level (serving the role of input power). Clearly, sound pressure level is NOT the same as power. However, the underlying purpose of loudness is to make relative comparisons of the sound pressure level. So, using sound pressure level in place of power in the definition of decibel makes sense.

Figure 6 also contains the relative loudness of each sound source in decibels (dB), as calculated from sound pressure levels (SPL) of the sources. Since the auditory threshold is the foundation for decibel calculation, its calculated dB (SPL) is 0. Normal conversation is 40-60 dB (SPL). These values indicate that normal conversation SPL is significantly greater than the auditory threshold. A trumpet has a computed dB (SPL) of 130. Clearly, a trumpet has an even greater sound pressure level than either normal conversation or the auditory threshold. Finally, dB (SPL) for a jet engine is 150. Obviously, a jet engine will exert even greater pressure on the surrounding air molecules than a trumpet, a normal voice, or a voice at the auditory threshold.

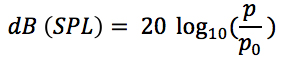

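The decibel level of a sound pressure level is computed by the following equation:

$$\text{dB (SPL)} = 20 \log_{10}\left(\frac{p}{p_0}\right)$$

where $p$ is the sound pressure of the source in Pa and $p_0$ is the auditory threshold of $2 \times 10^{-5}$ Pa.

dB (SPL) values computed by this formula are commonly called the loudness of the sound. A sound source with a higher dB (SPL) level is considered to be a louder sound.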
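The decibel values quoted for Figure 6 can be reproduced with a few lines of code. The sketch assumes the standard definition of dB (SPL) as 20 times the base-10 logarithm of the ratio of the sound pressure to the auditory threshold p0 = 2 × 10⁻⁵ Pa; the function name is an assumption, and the pressures are the ones given in the text.

```python
import math

P0_PA = 2e-5  # auditory threshold (20 micropascals)


def db_spl(pressure_pa):
    """Sound pressure level in dB relative to the auditory threshold."""
    return 20.0 * math.log10(pressure_pa / P0_PA)


sources = [
    ("auditory threshold", 2e-5),
    ("quiet end of normal conversation", 0.002),
    ("trumpet", 63.0),
]
for label, pressure in sources:
    print(f"{label:34s} {pressure:10.5f} Pa -> {db_spl(pressure):6.1f} dB (SPL)")
```

The trumpet comes out just under 130 dB (SPL), matching the rounded value in Figure 6.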

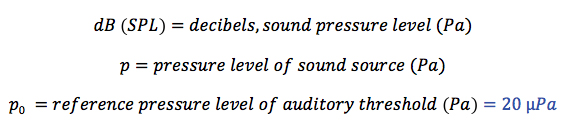

A simple example calculation demonstrates use of this formula. Using the values in Figure 6, for a trumpet, the decibel level, dB (SPL) calculated by the formula is as follows:

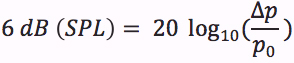

From this formula, a number of loudness relationships can be determined. An increase of 6 dB (SPL) doubles the loudness of the sound.

where ∆p = change in SPL, in µPa

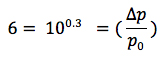

And, an increase of 10 dB (SPL) roughly triples the loudness of the sound (a factor of about 3.16).

Using this approach, the increase in loudness of two sounds can easily be compared.

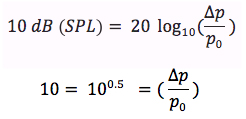

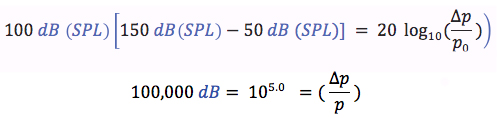

Using these relationships and values from Figure 6, some exact loudness comparisons can be shown. A normal conversation has an average loudness level of 50 dB (SPL). Jet engines produce a loudness level of 150 dB (SPL). Using the formula above:

$$10^{(150 - 50)/20} = 10^{5} = 100{,}000$$

A jet engine is 100,000 times louder than a normal conversation. This increase in loudness should not be surprising. When standing next to a jet engine where this loudness was measured, holding a conversation is impossible. In fact, in the absence of hearing protection, a listener experiences pain and suffers severe damage to hearing. The level at which pain is experienced has been measured to be 150 dB (SPL) [5].
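The comparisons in this section can be checked by inverting the decibel definition. Assuming dB (SPL) = 20 log10(p / p0), a difference of Δ dB between two sounds corresponds to a sound pressure ratio of 10 raised to the power Δ / 20. The function name below is an assumption; the decibel values are the ones used in the text.

```python
def pressure_ratio(delta_db):
    """Ratio of sound pressures for a given difference in dB (SPL)."""
    return 10.0 ** (delta_db / 20.0)


print(f"+6 dB   -> x{pressure_ratio(6.0):.3f}")    # close to 2
print(f"+10 dB  -> x{pressure_ratio(10.0):.3f}")   # a little over 3
print(f"+100 dB -> x{pressure_ratio(150.0 - 50.0):,.0f}")  # jet engine vs. conversation
```

The ratio describes sound pressure, which is the quantity this article uses as its measure of loudness.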

Frequency and loudness are two different characterizations of sound. Frequency is an absolute value that describes the oscillations of a vibrating sound source. Loudness is a relative value that compares the sound pressure level of the sound wave of one sound with the sound pressure level of a sound at the auditory threshold.

Changing the loudness of the sound has no effect on its frequency. The loudness can be decreased or increased but the frequency will not change. This independence occurs because frequency describes oscillations while loudness describes sound pressure level relative to the auditory threshold.

A general sound consists of the combination of multiple frequencies. This fact was determined by Wiener in his application of the wave theory of Fourier to frequency variables. [See History section above]. Loudness of a specific frequency within a specific and general sound cannot be determined. This limitation is a result of modern sound recording equipment. Loudness can be determined for the complete sound, not for frequencies within a complete sound.

However, loudness of a frequency can be determined if the sound consists of a single frequency. A sound that consists of a single frequency is called a pure tone. Audiologists use pure tones when testing a person’s hearing.

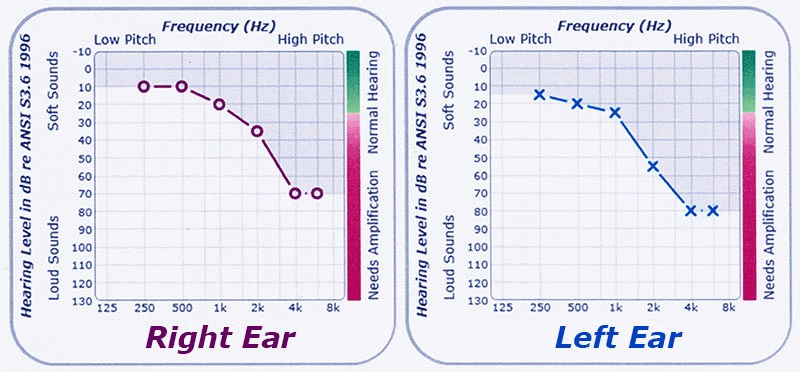

Figure 7 shows an audiogram, the results of a hearing test performed by an audiologist.

Figure 7: Hearing Test Audiogram

Source: resonancehearingaids.com/audiogram-hearing-tests

Each ear is individually tested. Look at the tests for the right ear. A range of test frequencies appears along the x axis of the test results. Along the y axis, a range of loudness levels is shown. These levels are expressed in dB (SPL), the loudness measurement.

In the center of the graph, a plot appears. Each circle represents the minimum loudness level that the listener was able to hear sound at the tested frequency. For instance, at 1000 Hz (1k) along the bottom axis, the listener first heard the sound presented at a loudness of 20 dB (SPL). According to the standard criteria for sound hearing capability, this listener needs amplification at this frequency.

In this situation, a loudness in dB (SPL) is related to a frequency. This result can be determined because the audiologist is in control and presents a single frequency, a pure tone. Audiology testing equipment enables an audiologist to set a single frequency, then vary the loudness starting at a very low dB (SPL). Loudness is varied until the listener indicates that the sound is first heard. This loudness level is then entered into the audiogram at the specific frequency.
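The procedure just described, fix a frequency and raise the loudness until the listener responds, can be sketched as a simple loop. This is a simplified simulation and not a clinical protocol: the 5 dB step, the 0 to 120 dB range, the function name, and the listener's thresholds are all invented for illustration.

```python
def find_threshold(can_hear, start_db=0, step_db=5, max_db=120):
    """Raise the level in fixed steps until the listener first responds.

    can_hear is a function that takes a level in dB and returns True or False.
    Returns the first level heard, or None if nothing in the range was heard.
    """
    level = start_db
    while level <= max_db:
        if can_hear(level):
            return level
        level += step_db
    return None


# Hypothetical listener: true thresholds in dB at each test frequency in Hz.
true_thresholds_db = {250: 10, 500: 15, 1000: 20, 2000: 30, 4000: 45}

audiogram = {}
for frequency_hz, true_db in true_thresholds_db.items():
    # The simulated listener responds once the level reaches the true threshold.
    audiogram[frequency_hz] = find_threshold(lambda level, t=true_db: level >= t)

for frequency_hz, level_db in audiogram.items():
    print(f"{frequency_hz:5d} Hz: first heard at {level_db} dB")
```

Each entry pairs one frequency with one loudness level, which is possible only because a single pure tone is presented at a time.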

Again, this situation is a very special, controlled situation. Loudness of a specific frequency within a specific and general sound cannot be determined. Relationship between loudness, general sounds, and component frequencies will be addressed in a future article.

I recently had a civil case in which the nature of sound was an issue. A number of sound related concepts were used in analysis of the evidence in the case. One of the goals during testimony is to demonstrate the sound related concepts in a meaningful and palatable manner, in addition to all of the technical details. After ruminating on this goal for a while, I had a brainstorm. Use sound itself to demonstrate some of the basic issues and principles.

So, I collected a set of sound files and wrote a piece of software to enable demonstration of the sound related concepts. I am making the software and sound files available for readers of these articles.

This is a demonstration program developed to illustrate principles explained in these articles. Normal user interface protections have not been programmed as would be the case if this program were production quality. Please follow the directions as indicated.

You can download a zip file containing the software and the sound files. See the download instructions in the appendix to this article. PLEASE READ THE DOWNLOAD INSTRUCTIONS CAREFULLY!

Note: SECRETS of Home Theater and High Fidelity disclaims any responsibility for the operation of this demonstration software, developed by Dr. Krell of SW Architects.

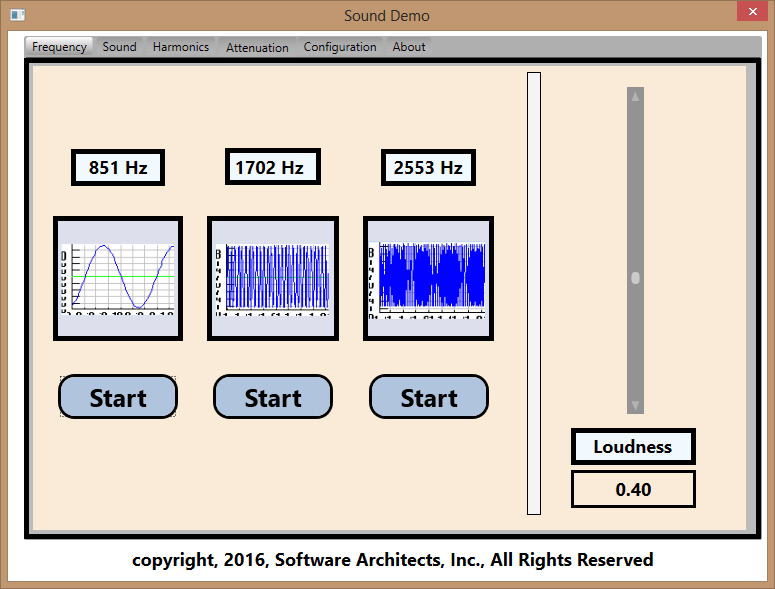

Figure 8 shows the user interface to the sound demonstration program.

Figure 8: Sound Demonstration Program User Interface

This user interface is organized around tab pages. Each tab page is used to focus on demonstrating specific sound principles. The first tab page is labeled "Frequency". This specific tab page is designed to illustrate pure tones and loudness associated with a single pure tone, as utilized by audiologists.

As a minor note, the loudness level is represented in the range 0.0 to 1.0. Obviously, this range is not dB (SPL) but a representation of the range of dB (SPL) values. This range was used for ease of programming. Actual dB (SPL) will depend on the settings for your sound card.

As shown above, three (3) pure tones are associated with this tab page: 851 Hz, 1702 Hz, and 2553 Hz. In order to hear that loudness can be associated with a single pure tone, perform the following steps:

- Push any start button

- Drag the thumb of the slider on the right to adjust the loudness

- Push the stop button that appears

- Make sure that you stop a playing sound before you start a new sound

- Make sure that you stop all sounds before you exit the program

As you move the loudness slider to increase and decrease the loudness, the frequency remains exactly the same.
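The same independence can be seen in numbers. The sketch below is not the demonstration program's code; it is a minimal stand-in that generates 16-bit samples of an 851 Hz tone at two loudness settings on the 0.0 to 1.0 scale. The function names, the 44100 Hz sample rate, and the 16-bit sample format are assumptions.

```python
import math


def pure_tone(frequency_hz, loudness, duration_s=1.0, sample_rate_hz=44100):
    """Return 16-bit samples of a sine tone; loudness is a 0.0 to 1.0 scale factor."""
    loudness = max(0.0, min(1.0, loudness))
    peak = 32767 * loudness
    count = int(duration_s * sample_rate_hz)
    return [
        int(round(peak * math.sin(2.0 * math.pi * frequency_hz * n / sample_rate_hz)))
        for n in range(count)
    ]


def upward_zero_crossings(samples):
    """Count crossings from negative to non-negative values (one per cycle)."""
    return sum(1 for a, b in zip(samples, samples[1:]) if a < 0 <= b)


quiet = pure_tone(851.0, 0.25)
loud = pure_tone(851.0, 1.0)

# The peak sample value changes with the loudness setting...
print(max(quiet), max(loud))
# ...but the number of cycles counted in one second does not.
print(upward_zero_crossings(quiet), upward_zero_crossings(loud))
```

Only the height of the waveform changes between the two settings. The spacing of the cycles, and therefore the frequency, stays the same.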

For now, please stay away from the other tabs. These tabs are meant to demonstrate sound concepts that will be discussed in future articles. As future articles utilize the features of this demonstration program, these tabs will be revealed.

An alert reader might notice that the two larger frequencies are multiples of the first frequency. This relationship is intentional. Ah, but this relationship is one of the subjects of the next article!

[1] Fourier, Joseph. "The Analytical Theory of Heat". Translation by Alexander Freeman, Cambridge: At the University Press, London, 1878. English translation of French mathematician Joseph Fourier’s "Théorie Analytique de la Chaleur", originally published in French in 1822.

[2] Wiener, Norbert, “Generalized Harmonic Analysis”, Acta Mathematica. 55 (1): 117–258, 1930.

[3] W. H. Martin, “Decibel – The Name for the Transmission Unit”, Bell System Technical Journal, January 1929. Available online at www3.alcatel-lucent.com

[4] The First International Acoustical Conference, Nature, volume 140, page 370 (August 28, 1937).

[5] Nave, Carl R. (2006). “Threshold of Pain”. HyperPhysics. SciLinks. Retrieved 2009-06-16.

Wikipedia References:

10. Winer, Ethan (2013). "1". The Audio Expert. New York and London: Focal Press. ISBN 978-0-240-82100-9.

16. Realistic Maximum Sound Pressure Levels for Dynamic Microphones – Shure

18. Swanepoel, De Wet; Hall III, James W; Koekemoer, Dirk (February 2010). “Vuvuzela – good for your team, bad for your ears” (PDF). South African Medical Journal. 100 (4): 99–100. PMID 20459912.

19. “Decibel Table – SPL – Loudness Comparison Chart". "sengpielaudio". Retrieved 5 Mar 2012.

20. William Hamby. “Ultimate Sound Pressure Level Decibel Table”. Archived from the original on 2010-07-27.

Original Citations From Wikipedia

Appendix

Sound Demonstration Software Program Download Instructions

THIS PROGRAM IS FOR WINDOWS 7 AND GREATER ONLY!!!

EVEN WITH THOSE VERSIONS, YOU MAY BE ASKED TO DOWNLOAD NEWER WINDOWS COMPONENTS.

- Open a browser of your choice.

- Enter the address below into the address bar of your browser: www.swarchitects.com/SoundDemo

- Right click on the file name xnafx40_redist.msi. A popup menu will appear. Select the menu option that allows you to download to your hard drive. This option is different for every browser.

- Right click on the file name SoundDemo.zip. A popup menu will appear. Select the menu option that allows you to download to your hard drive. This option is different for every browser.

- Use Windows Explorer to navigate to the folder where the files were saved.

- Double click on the msi file to install this required library. If you do not install this library, the program will crash!

- Open the zip file. Extract the contents of the zip file to a folder.

- Use Windows Explorer to navigate to the folder where the zip file was extracted.

- Double click on the file SoundDemo.exe. Make sure that you choose the proper exe file. One file that has this extension is a configuration file.

- DO NOT MOVE OR REMOVE ANY OF THE FILES OR FOLDERS.

- If the loudness level is low when the thumb of the slider is dragged, adjust the sound level of the sound card.

WARNING

Both the download instructions and the program have been reasonably tested. However, errors may occur. Please forward any problems/issues with the program to me at: Bruce Krell, [email protected]

About the Author: Bruce E. Krell, Ph.D

Bruce E. Krell has a BS in Math and English from Tulane University, an MBA in Management and Economics from the University of New Orleans, and a PhD in Applied Math from the University of Houston. Over the years, Bruce has worked in a variety of areas as an Applied Mathematician, an Applied Physicist, and a Software Engineer. Areas in which Bruce has worked include communications satellites, spy satellites, missile guidance systems, radar manufacturing, 2-D and 3-D microscope engineering, video engineering and analysis, sound engineering, trajectory physics, structural engineering, civil engineering, and FDA Drug Testing, among others. Over the years, Bruce has written 6 books on system engineering, software engineering, and forensic science. During his career, he has given lectures and workshops all over the US and Europe, in many of the areas in which he has worked.

The author wishes to thank Dr. David A. Rich for his contributions to this article.