HDR and Dolby Vision: What They Are and Why You Will Want Them Highlights

Previously known only in the realm of digital still (snapshot) photography, High Dynamic Range (HDR) is coming to your television in the near future. As we speak, standards are being decided upon, prototype displays have been built and tested, and Dolby is even adding it to its emerging Dolby Vision standard. As displays increase their pixel density, expand their color gamuts, and support faster frame rates, dynamic range is the one frontier that has yet to be fully explored. In this article, we’ll discuss exactly what HDR is, how it might be implemented, and why you will want it.

HDR and Dolby Vision: What They Are and Why You Will Want Them Highlights Summary

- Expanding a two-dimensional image’s dynamic range makes it more realistic and 3D-like.

- Current displays are too limited in contrast and peak output to accurately portray realistic moving images.

- By adding greater control of brightness at the pixel level, true intra-image contrast ratios of over 12,000:1 can be realized.

- And yes, you will need re-mastered content and a new media player and a new display.

Introduction to HDR and Dolby Vision: What They Are and Why You Will Want Them

Ask the majority of AV enthusiasts about HDR and you’ll likely get an explanation of the features built into the latest digital snapshot cameras and smartphones. In the photography realm, High Dynamic Range lets you take properly exposed pictures that compensate for difficult lighting conditions, and allows every object to be seen in detail, regardless of how bright or dark it is. Think of a scene where your subject is standing against a bright background. A typical camera has to choose between either exposing for the subject (washing out the background in a splash of white) or exposing for the background (rendering the subject as a detail-less dark silhouette).

An HDR camera solves this problem by taking two or more exposures in sequence, then combining them into a single picture that shows you every detail. The technology has become so common in still photography today that even cheap smartphones have it.

What if you could apply that same principle to a television display? Imagine if your TV had a true pixel-level contrast ratio of over 12,000 to 1. The darkest black would be 0.08cd/m2 (candelas per square meter), and the brightest white would be 1,000cd/m2, in the same image. Considering the most powerful LCD backlights create around 350cd/m2, that’s a serious amount of output and an even more serious level of contrast. The current trend in expressing luminance from the screen is “nits”, where 1 nit is equal to 1cd/m2. Foot-lamberts have also been used in the past to describe luminance.
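To make the arithmetic concrete, here is a small Python sketch of how those figures relate. The function names are our own inventions for illustration, and the foot-lambert conversion factor is rounded.

```python
# Illustrative helpers; the names are hypothetical, not from any standard.
CD_M2_PER_FOOT_LAMBERT = 3.426  # 1 fL is roughly 3.426 cd/m2 (nits)


def contrast_ratio(white_nits, black_nits):
    """Return white luminance divided by black luminance (12500.0 means 12,500:1)."""
    if black_nits <= 0:
        raise ValueError("black level must be above zero for a finite ratio")
    return white_nits / black_nits


def nits_to_foot_lamberts(nits):
    """Convert cd/m2 (nits) to foot-lamberts."""
    return nits / CD_M2_PER_FOOT_LAMBERT


# Figures quoted in the text: 1,000 nits white over a 0.08-nit black.
print(f"HDR target: {contrast_ratio(1000.0, 0.08):,.0f}:1")
print(f"1,000 nits is about {nits_to_foot_lamberts(1000.0):.0f} fL")
```

A 1,000-nit white over a 0.08-nit black works out to 12,500:1, which is where the "over 12,000 to 1" figure comes from.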

Some consumer displays can go much brighter. LCDs in particular can get very bright; the Sharp THX LCD does about 1,200 nits. Plasma and OLED can’t go nearly as bright. Regardless of how bright a display can get, the standard for SDR (Standard Dynamic Range) content is 100 nits, so all of these displays should be calibrated for 100 nits.

Right about now, Pioneer Kuro owners are saying, “Hmmm, my TV rocks out about 25,000:1 native contrast; how is this better?” What we are talking about is intra-image contrast, not the on/off figure so often quoted in display specs and reviews. A common test for this is the ANSI checkerboard pattern, where black and white squares are measured to determine a more real-world contrast ratio. That same Pioneer Kuro measures closer to 8,000:1 in that test. And the average edge-lit LCD panel comes in at around 3,000:1 in its native state. It should be noted that the ANSI test is not ideal because of its 50-percent average picture level (APL). Real-world content rarely exceeds 20 percent, so a new procedure outlined in a proposed spec from the International Telecommunication Union (ITU), titled “Specification of a signal for measurement of the contrast ratio of displays”, BT.815, addresses this issue. Using a new test pattern, future displays will likely be benchmarked by this method.
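For readers who want to see how a checkerboard measurement turns into a ratio, here is a minimal Python sketch. It assumes the usual approach of averaging the white-square readings and dividing by the average of the black-square readings; the meter readings are made-up numbers, not measurements of any real display.

```python
def ansi_contrast(white_readings, black_readings):
    """Average the white squares, average the black squares, and divide."""
    avg_white = sum(white_readings) / len(white_readings)
    avg_black = sum(black_readings) / len(black_readings)
    return avg_white / avg_black


# Hypothetical meter readings in nits from a 4x4 checkerboard
# (eight white squares and eight black squares).
white = [121.0, 119.5, 120.2, 120.8, 119.9, 120.1, 119.7, 120.4]
black = [0.041, 0.039, 0.040, 0.042, 0.038, 0.040, 0.041, 0.039]

print(f"Intra-image contrast: {ansi_contrast(white, black):,.0f}:1")
```

Because half the squares are white, the pattern sits at a 50-percent APL, which is the limitation described above.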

We are going to cover some of the proposed standards for implementing HDR in consumer displays: principally those associated with Dolby Vision, which is in development as a new set of specs for contrast, gamma, color, frame-rates, resolution, and bit-depth. Let’s take a look.

HDR is best-known as a technique for still photography. In fact, it was first used in the 1850s by French photographer Gustave Le Gray. He understood the dynamic range limitations of film negatives and used two separate exposures to create images that overcame those limits.

The above photo is a daguerreotype (the photo-sensitive emulsion was on a metal plate) from 1855. The film used had an exposure range of about three f/stops. Each stop is twice as bright, or half as bright, as the one next to it. Under those circumstances it would be impossible to retain detail in both the ocean and the sky. The light level of the sky is obviously far higher than that of the water. A normal exposure would either wash out the sky to keep the boat visible or, to retain the cloud details, leave the ocean so dark that the boat would be invisible. Le Gray simply combined two exposures along with some creative dodging and burning in the printing process to create one of the earliest examples of HDR.

Other photographers, like Ansel Adams, used dodging and burning exclusively to extend the tonal range of their images. By selectively covering (dodging) or exposing (burning) different parts of the image, he could allow details to come through that would be invisible in a normal print. This technique was ultimately defined as the Zone System.

To capture the tonal range shown above with a single film exposure and print would be impossible. Only careful manipulation of the various dark and light zones can produce this level of dynamic range. Adams took a nearly century-old technique and elevated it to an art form.

The goal of all this was, and is, to create images that more closely portray what the human eye sees. With the rods and cones in our retinas, plus the ability of our brain’s visual cortex to process an image’s dark and bright regions separately, we are able to see the complete range of brightness levels. A camera, whether it captures a still or a moving image, cannot do this.

With the advent of digital photography, it became possible to add these darkroom techniques to the software controlling the camera’s imaging sensors. Those sensors are bound by similar limitations to film in that their dynamic range is somewhat limited. But since it’s only a matter of setting a digital camera to take multiple exposures and combine them into an HDR image, we can now have something that used to require hours in a darkroom happen in mere seconds in a smartphone.

Here is an example of how four separate exposures are combined into a single tone-mapped image.

Each subsequent exposure adds detail to the St. Louis arch and capitol building. By the time you get to number four, you can see the clouds in the sky but the building and arch are completely washed out. Now see what the combined result shows. This is called “tone mapping.”

You can see every detail in the darkest and lightest portions of the image, just as your eye would see it by adapting to the extreme light and dark levels. The final picture has only the best elements of each exposure while throwing away the parts without usable detail.
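The idea of keeping only the well-exposed parts of each frame can be sketched in a few lines of Python. To be clear, this is a simplified exposure-fusion weighting of our own choosing, not the algorithm used by any particular camera or phone, and it skips the radiance-recovery step of a full HDR merge. Pixels are grayscale values between 0.0 and 1.0, and the `sigma` width is an assumed tuning value.

```python
import math


def well_exposedness(value, sigma=0.2):
    """Weight near 1 for mid-tones, near 0 for crushed blacks or clipped whites."""
    return math.exp(-((value - 0.5) ** 2) / (2 * sigma ** 2))


def fuse_exposures(exposures):
    """Blend same-sized grayscale frames (flat lists of 0.0-1.0 values) pixel by pixel."""
    fused = []
    for pixel_values in zip(*exposures):
        weights = [well_exposedness(v) for v in pixel_values]
        total = sum(weights)  # always positive because exp() never reaches zero
        fused.append(sum(w * v for w, v in zip(weights, pixel_values)) / total)
    return fused


# Hypothetical two-pixel scene: [shadowed subject, bright sky]
dark_exposure = [0.02, 0.55]    # sky looks fine, subject is nearly black
bright_exposure = [0.45, 1.00]  # subject looks fine, sky is clipped

print(fuse_exposures([dark_exposure, bright_exposure]))
```

In the result, the subject pixel is drawn almost entirely from the bright exposure and the sky pixel almost entirely from the dark one, which is the "best elements of each exposure" behavior described above.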

Obviously the ability to do this in a moving image has profound implications. If you’ve read our display reviews here at Secrets, you know that contrast and dynamic range are the most-important metrics in determining image quality. The best displays can render deep blacks and bright whites simultaneously to show every detail and provide a sense of depth that rivals even the best 3D presentation. But even the greatest of those can only achieve around 8,000:1 intra-image contrast. HDR promises even more, and it won’t require an exotic display with hand-selected parts to make it happen.

It’s becoming apparent already why you would want HDR in your display. As great as today’s televisions and projectors look, there is still vast room for improvement, especially in the area of dynamic range. The industry is currently busy adding pixels, but ultimately, higher resolution won’t produce the kind of image that’s possible with HDR.

To understand what will be required of tomorrow’s displays, we have to look at the limitations of the technology we already have. There are two main categories of flat panels currently used in consumer-grade HDTVs: light-valve, represented by LCD, and light-emitting, represented by OLED. Since LG and Samsung both stopped manufacturing plasma panels last year, OLED remains the only light-emitting technology left standing.

In an LCD panel, the light comes from an array of LEDs or, in older sets, a cold-cathode fluorescent tube. The LEDs can be arranged at the edges of the panel or, in more expensive displays, directly behind the thin-film transistor layer in zones. To modulate light levels, the liquid crystals in each pixel are twisted to either block or admit light. The inherent flaw in this approach is that the light is always present, and the pixels can never completely block it. Plus, there is always a little light bleed between adjoining pixels that serves to reduce intra-image contrast.

The most difficult thing for any LCD to do is produce convincingly deep blacks. Panel technology has been tweaked and massaged for years to the point where the best TVs can manage a native contrast ratio of around 5,000:1 on/off and perhaps 3,000:1 intra-image as measured by the ANSI checkerboard pattern. It’s likely that the limit has been reached, or is close at hand, for how black an LCD panel can become.

The next best way to increase contrast then is to bump up the other end of the scale. High contrast doesn’t necessarily have to include super-low black levels. If you can produce a sufficiently high white level, the perception of contrast is correspondingly higher. This is exactly what HDR for video displays proposes to do. Rather than drop black levels any further, an HDR display will have a far higher white level, on the order of 1,000 nits or more.

Before we go further, let us remind you what a nit is and why it’s important. As we mentioned at the beginning, light output from displays previously has been measured in either foot-lamberts (fL) or candelas per square meter (cd/m2). The latter is also known as a nit: 1 cd/m2 = 1 nit.

A typical LCD panel can produce a full-white field of around 350 nits. Some can exceed 400, and we have seen displays that put out 4,000 nits, but they are not consumer products. Dolby Vision proposes 1,000 nits. Now that is some serious brightness, but if it can be achieved on a consumer TV, it will drive intra-image contrast up to the 12,000-14,000 to 1 range.

So how can we do this? Obviously you’ll need to have at least close to pixel-level control. An array of LEDs arranged at the edges of a panel won’t cut it. They can be selectively dimmed, but only very large zones of the screen would be affected. There are two kinds of prototype displays we’ve heard about that can manage it. For reasons of non-disclosure we can’t be too specific with brands or specs.

The first one is called IMLED, or Individually Modulated LED. This is like a tabletop version of the jumbotron screens seen at stadiums and arenas. It is a light-emitting display, which means each pixel emits its own light; there is no backlight. Each pixel is a single LED that can be modulated. The likelihood of this technology finding its way into your living room any time soon is quite small. Now you’re probably saying, “What about OLED?” OLED shares a trait with plasma in that as you light up more pixels, you increase the load on the power supply. To keep the TV from overheating, or requiring a massive power supply, output must be throttled as the average picture level increases. The bottom line is 1,000 nits probably won’t happen from OLED in its current form.

At Cine Gear, Sony was showing a pro-grade 4K reference monitor, the BVM-X300. It’s a $42,000 OLED panel capable of 1,000 nits, but only for a very small portion of pixels. As you increase the average picture level, the brightest pixels become dimmer. OLED is going to struggle with HDR.

With OLED not up to the task and IMLED still in the development stage, that leaves traditional LCD. The one consumer-level prototype we’ve learned of is an LED-backlit panel with 384 zones that can be controlled separately. This is obviously not ideal because when an image becomes more detailed, there will be halo effects when an object is smaller than the zone it occupies on-screen. In the pro-monitor category, Dolby’s PRM-4200 and 4220 reference displays employ 1,400 zones. While not ideal, both products are a step in the right direction. Bringing this kind of bleeding-edge technology into the home always involves compromise.
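The halo problem is easy to see in a toy model. The Python sketch below assumes a deliberately crude panel: each zone's backlight is driven to the brightest pixel in that zone, and the LCD layer can only attenuate that light by its native contrast ratio. The function name, the 3,000:1 figure, and the one-dimensional "row" are all our own simplifications, not a description of any real TV's dimming algorithm.

```python
def displayed_luminance(targets, zone_size, native_contrast=3000.0):
    """Return the luminance each pixel actually shows on a zone-dimmed LCD row.

    targets: desired luminance per pixel in nits.
    zone_size: number of pixels sharing one backlight zone.
    """
    shown = []
    for start in range(0, len(targets), zone_size):
        zone = targets[start:start + zone_size]
        backlight = max(zone)                 # zone must be lit for its brightest pixel
        floor = backlight / native_contrast   # light the LCD layer cannot block
        shown.extend(max(target, floor) for target in zone)
    return shown


# A single 1,000-nit highlight on a black background, three zones of four pixels.
row = [0.0, 0.0, 0.0, 0.0,
       0.0, 1000.0, 0.0, 0.0,
       0.0, 0.0, 0.0, 0.0]

print(displayed_luminance(row, zone_size=4))
```

The zones with no highlight go fully dark, but the black pixels that share a zone with the highlight are lifted to about a third of a nit. That raised patch around a small bright object is the halo, and smaller zones shrink it.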

Now that you have some idea of what hardware is required to support HDR, let’s answer the big question: how will the content get to my screen?

In 2007, Dolby acquired a small company called BrightSide Technologies. They were responsible for creating the world’s first prototype HDR display, the DR37-P. This monitor uses individually modulated LEDs for each pixel that produce 256 levels of light from zero to almost 4,000 nits. Since the LEDs can be turned completely off, the contrast ratio is theoretically infinite. When the LEDs are energized to their dimmest power-on state, the ratio is around 200,000:1.

Since then, Dolby has developed Vision technology into a whole list of standards, of which HDR is a part. It’s a potential way forward for future display tech that includes the wider Rec. 2020 color gamut, HDR, Ultra HD resolutions (3840×2160), higher frame rates, and a new way of defining gamma, called PQ. PQ stands for Perceptual Quantizer and is defined by SMPTE ST 2084. All of this is designed to take moving pictures to the next level beyond today’s HD and standard dynamic range content.

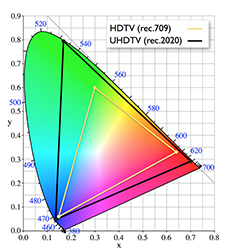

Let’s pick this apart a bit. You may have heard of the proposed Rec. 2020 color gamut spec for Ultra HD. It allows for an even greater color palette than that provided by the Digital Cinema Initiatives and is substantially larger than today’s Rec. 709/sRGB standard.

The CIE chart shown above represents all the colors of the spectrum visible to the eye. The yellow triangle is what our current crop of HDTVs can display, and the black triangle is the proposed spec for Ultra HD displays, which is also part of the proposed Dolby Vision spec.

Obviously, to show smooth color gradations and transitions, an increase in bit-depth is required. Current content is encoded using 8 bits per channel (24 bits per pixel). That means a total of 256 (0-255) shades (brightness levels) are possible for each color. Video encoding limits that range to levels 16-235, so there are fewer gradations. The Rec.709 gamut includes enough possible colors so that banding artifacts are reduced to the point of invisibility. If you see banding on your TV, it’s more likely the result of file compression, not insufficient bit-depth.

Increasing the bit depth to 10 or 12 bits has been discussed for years but never actually implemented in home video content until recently. There are 10 and 12-bit displays available, but they can only up-convert the incoming signal. All of the Ultra HD content on Netflix and Amazon is encoded in a 10-bit 4:2:0 format using HEVC compression.

Both the larger color palette of Rec. 2020 and the increased dynamic range of HDR will require at least 10-bits per channel to ensure smooth transitions from dark to light and saturation levels. With 1,024 levels of luminance available, the palette increases to 1.07 billion possible colors.
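The level and color counts quoted above follow directly from the bit depth, as this short Python sketch shows. The helper names are ours; the video-range calculation assumes the usual convention that the 8-bit limits of 16 and 235 are scaled by a factor of four for each two extra bits.

```python
def levels_per_channel(bits):
    """Number of code values available to each of red, green, and blue."""
    return 2 ** bits


def total_colors(bits):
    """Every combination of the three channels."""
    return levels_per_channel(bits) ** 3


def video_range_levels(bits):
    """Code values between video black and video white, inclusive.

    Assumes the 8-bit limits (16 and 235) scale by 2**(bits - 8).
    """
    scale = 2 ** (bits - 8)
    return (235 - 16) * scale + 1


for bits in (8, 10, 12):
    print(f"{bits}-bit: {levels_per_channel(bits):,} levels per channel, "
          f"{video_range_levels(bits):,} in video range, "
          f"{total_colors(bits):,} colors")
```

At 8 bits this gives 256 levels and about 16.8 million colors; at 10 bits it gives 1,024 levels and about 1.07 billion colors, matching the figures in the text.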

Of course that will mean unless we want yet another disc format, we’ll need a better form of compression to keep those video files to a manageable size. Fortunately that’s already taken care of thanks to HEVC. It offers double the compression rate of the currently-used H.264/MPEG-4 AVC standards and supports resolutions up to 8192×4320 pixels. It’s already in use in streaming applications where bandwidth is the most limited. Once it’s employed in Blu-ray formats, it will be feasible to encode higher resolutions and bit-depths onto existing optical discs.

The final component of Dolby Vision (for the purposes of this discussion) is Perceptual Quantization (PQ). This is what is proposed to replace gamma as the guideline for how luminance levels should be encoded in content and displayed on our televisions. Sony has already implemented PQ in the BVM pro-displays mentioned above. They showed it at Cine Gear recently for an SDR vs HDR comparison.

Gamma is a concept that goes back to the earliest CRT displays. Quite simply, content is mastered to the capabilities of the television. Since light output from a cathode-ray tube rises at a progressive rate, video content must be mastered to match this in order to retain the correct dynamic range at all average picture levels.

Of course, CRT is effectively dead, and we are no longer bound by this limitation. In the digital realm, gamma can be anything you wish. But in order to maintain the relationship between content creation and end-user displays, the power function method for calculating gamma has remained in place. The only modification to this so far is the Rec. BT.1886 standard, which uses an average gamma value of 2.4. (Gamma specifies the rate at which black transitions to white with respect to the input signal.)
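Here is a rough Python sketch of the two curves just described: a plain power-function gamma, and the BT.1886 variant as we understand it, which keeps the 2.4 exponent but adjusts the curve for the display's measured black level. The function names and the default 100-nit white level are our own assumptions for illustration.

```python
def power_gamma(signal, gamma=2.4, white_nits=100.0):
    """Simple power-law gamma: a 0.0-1.0 signal in, luminance in nits out."""
    return white_nits * signal ** gamma


def bt1886_eotf(signal, white_nits=100.0, black_nits=0.0, gamma=2.4):
    """BT.1886-style curve that lands exactly on the display's black and white levels."""
    lw = white_nits ** (1 / gamma)
    lb = black_nits ** (1 / gamma)
    a = (lw - lb) ** gamma      # overall gain
    b = lb / (lw - lb)          # black-level lift
    return a * max(signal + b, 0.0) ** gamma


# Compare the two curves on a display with a 0.05-nit black level.
for signal in (0.0, 0.1, 0.5, 1.0):
    print(f"signal {signal:.1f}: "
          f"power {power_gamma(signal):8.3f} nits, "
          f"BT.1886 {bt1886_eotf(signal, black_nits=0.05):8.3f} nits")
```

With a black level of zero the two functions give the same result. With a real-world black level, the BT.1886 curve lifts the darkest signal levels so they start at the panel's actual black rather than at zero.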

What PQ proposes to do is eliminate the fixed relationship between signal input and output levels. By specifying brightness levels rather than signal levels in content, the dynamic range can be adjusted to the capabilities of the display. The principal benefit is that when you adjust your TV’s peak output to fit your unique viewing environment, content encoded with PQ will show its full dynamic range regardless of surrounding conditions. In theory it would eliminate the dark murky images that plague some contemporary Hollywood films. Your properly calibrated television would show all the detail present in the image in a manner suited to your particular room. It will also more closely match human visual perception. We’ll no longer be bound by a decades-old standard for controlling luminance.
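For the curious, the PQ curve itself can be written in a few lines of Python. The constants below are the ones published in SMPTE ST 2084, which defines the curve over a range of 0 to 10,000 nits; the function names are ours, and this is only a sketch of the math, not a description of how Dolby or any display maker implements it.

```python
# Constants from SMPTE ST 2084 (PQ)
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32
PEAK_NITS = 10000.0


def pq_eotf(signal):
    """Map a PQ code value (0.0-1.0) to absolute luminance in nits."""
    p = signal ** (1 / M2)
    return PEAK_NITS * (max(p - C1, 0.0) / (C2 - C3 * p)) ** (1 / M1)


def pq_inverse_eotf(nits):
    """Map absolute luminance in nits to a PQ code value (0.0-1.0)."""
    y = (nits / PEAK_NITS) ** M1
    return ((C1 + C2 * y) / (1 + C3 * y)) ** M2


for nits in (0.1, 100.0, 1000.0, 10000.0):
    print(f"{nits:>8} nits -> code value {pq_inverse_eotf(nits):.4f}")
```

Notice that the input to `pq_inverse_eotf` is a brightness level in nits rather than a fraction of whatever the display can do. That is the key difference from gamma: the signal describes absolute luminance, and roughly half of the code range is spent on levels at or below today's 100-nit SDR white.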

It’s obvious you’ll have to buy a new display at minimum to enjoy the benefits of Dolby Vision and/or HDR. And what we’re seeing so far will be a compromise at best. A zone-dimming LCD can approximate a 12 to 14-stop dynamic range but only full pixel-level control will show the technology at its maximum potential. And when content comes along with HDR included, you’ll need a new Blu-ray player that handles the HEVC codec and is able to output at least a 10-bit signal.
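Since each stop is a doubling of light, converting between stops and contrast ratios is just a base-2 logarithm. The following Python sketch (helper names are ours) shows where the contrast figures used in this article land in stops.

```python
import math


def stops_from_contrast(ratio):
    """Each stop doubles the light, so stops = log2(contrast ratio)."""
    return math.log2(ratio)


def contrast_from_stops(stops):
    """Inverse conversion: contrast ratio for a given number of stops."""
    return 2.0 ** stops


for ratio in (3000, 8000, 12000, 14000):
    print(f"{ratio:>6,}:1 is about {stops_from_contrast(ratio):.1f} stops")
```

The 12,000:1 to 14,000:1 range works out to a little over 13.5 stops, which sits inside the 12 to 14-stop figure quoted above.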

Just as with Dolby Atmos, there is no free lunch. You will have to invest in new components. And it’s likely that the first-generation products won’t include all the features. There are still no consumer displays that can even come close to rendering a Rec. 2020 color gamut. And the 1,000 nits of output proposed by Dolby is also something unlikely to make its way into that new Sony TV mentioned above.

Here is a screen-shot of a video taken with a RED camera and Dolby Vision, whose algorithms include HDR. The screen-shot is from the raw video files, and no processing is done in the camera, so the image looks rather flat in terms of contrast. This particular video was shot from inside a cave. Notice that you can see details on the rock inside the cave, and outside, where the sun is shining brightly, the highlights are properly exposed. Normally, if the exposure were set so that you could see the inside of the cave, the highlights outside would be blown out. If the exposure were set for the outside highlights, the inside of the cave would be totally dark. Dolby Vision, with its far higher contrast ratio and 21 f/stops of dynamic range, captures both the deep shadows and the bright highlights.

When the video files are put into post-production and edited, the shadow detail would have been lost with a conventional camera that didn’t have Dolby Vision. Since this video was shot with Dolby Vision, it can be edited to bring out all shadow details and given proper contrast settings, as shown in the second photo above. When viewed on an HDR monitor (and presumably in a theater), the portion of the scene that was outside would appear very bright on the screen, much brighter than you are viewing it on your computer monitor.

The next time you visit your local electronics dealer, they will likely have one or more of the new HDR flat panel displays, showing master video clips using full HDR. Take a close look, as you will be viewing the near future of Ultra-HD.

Final Thoughts

There’s no doubt that HDR is a good thing. One industry insider I spoke to said, “It’s the best thing since sliced bread.” It’s unfortunate he couldn’t come up with something more original. And this person said he’d rather have HDR at 1080p resolution than standard dynamic range in Ultra HD. Those of you who hold firm to the principles of imaging science know that contrast is king and resolution is less important than color accuracy and saturation. It’s great to see the industry making a committed effort to do something besides add more pixels. Dolby says they are making “better pixels”, and we applaud this. Ultra HD is nice, but what we’ve seen so far has done very little to advance image quality. HDR, however, has the potential to create a revolutionary leap rather than just an incremental one.

Our advice to videophiles is to wait for now. Let’s see what Samsung, Sony, and Vizio come up with before jumping in. And Dolby has yet to firmly lock down its Vision guidelines. Until that happens, we won’t see more than a trickle of new content.

We hope that eventually the floodgates will open, and we’ll see a surge forward towards greater dynamic range with HDR, more color with Rec. 2020 and, OK, we’ll take the extra pixels offered by Ultra HD too. It’s an exciting time in the display business, and we’ll try to bring you as much new information as we can. As always, please stay tuned!

Conclusions

Now that your appetite has been sufficiently stimulated, the question of “When can I have this?” is undoubtedly foremost in your mind. Believe it or not, Dolby Vision is here already. If you attend a screening of Disney’s Tomorrowland or Warner’s San Andreas in a Dolby Cinema-equipped theater (there are only a few), you’ll see the films shown with HDR, 4K resolution, and Dolby Atmos audio thrown in as a bonus.

Warner Brothers has also announced plans to re-master films like Edge of Tomorrow, The Lego Movie, Man of Steel, and Into The Storm in Ultra HD with HDR. While a Blu-ray release seems likely, they are also talking about releasing the content for streaming from Hulu. HDR is coming soon on Amazon, Netflix, and Vudu, which recently announced the addition of Dolby Vision to its streamed content. Fox has announced that, going forward, all its movies will be done in HDR. This all brings up the question of when we might see Dolby Vision in a consumer display.

In April, Sony announced its next-generation XBR-series 4K TV with a new feature called X-Tended Dynamic Range PRO. It claims to have HDR-like contrast enhancement for normal content. The panel has full-array local dimming, so it’s likely the set will offer very high contrast. Whether it matches the 12,000:1 intra-image figure proposed by Dolby remains to be seen.

Dolby’s other major partner is Vizio, and they are talking about displays that accept actual HDR content, which, at this time, Sony does not do. Of course, that content is about as rare as the Hope Diamond right now. And Vizio is not saying whether its TVs will add HDR enhancement to regular content. That’s a fairly important issue, and no one has been clear on how that might take shape. Also, Panasonic has added HDR enhancement for regular content to its currently available AX850 Ultra HD TV.